Creating realistic digital avatars for video games, VR, and education

20 cameras and one SDK

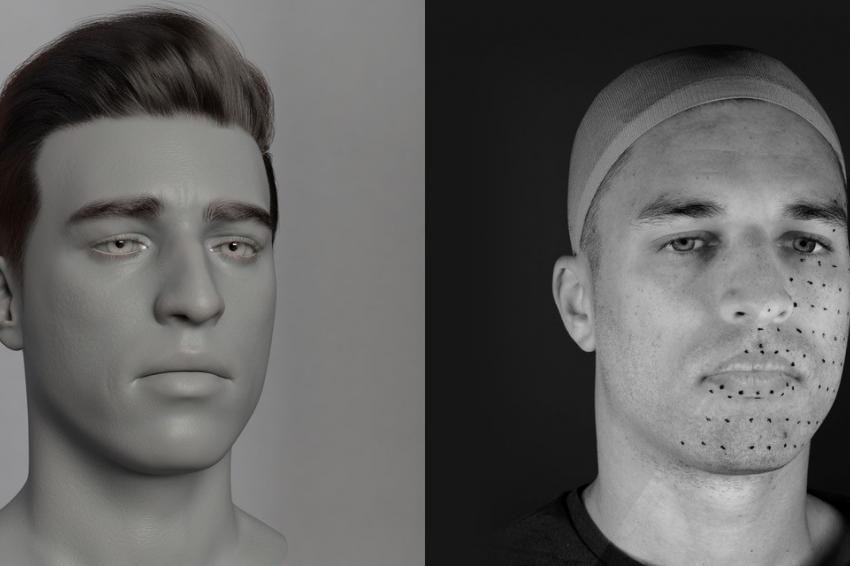

Photogrammetry renders are not created in the way that, for example, Gollum was for the Lord of the Rings films, where a body suit with 64 control points replicated Andy Serkis’s movements and a facial mask with 964 control points captured the fine details that brought the character to life. Instead, they use real images, stitched together to create a full, manipulable 3D version based on the millions of fine points in the face and body.

According to Craig Mason of Stasis Media, a digital production agency that specialises in digital human renders, you need full, detailed images to capture something that looks real. This is not only because of the way muscles move, but also because of micro changes that we detect subconsciously. “One of the important elements to capture is, as your face wrinkles up or scrunches up for a frown, the changes in blood flow across the face.

“Trying to capture that information can really make a big difference to how the animation is perceived. For example, as the face is scrunching up and wrinkling, the blood will pool up around your eyebrows and around your nose area. Having the ability to see and visualize those differences just reinforces the different expressions that we're trying to capture.

“And it’s not just one shot. For an avatar used in a film, commercial or video game, we have to do, at minimum, 48, and sometimes over 100, different facial captures to blend between different facial expressions.”
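To illustrate what "blending between" captured expressions can mean, here is a minimal sketch of linear blendshape interpolation, a common technique in facial animation. It is an assumption that this resembles the approach described; the article does not say which method Stasis Media uses. The function name, the vertex data, and the expression names are hypothetical.

```python
# Minimal linear blendshape sketch (illustrative only, not Stasis Media's pipeline).
# Each captured expression is stored as a list of vertices matching the neutral scan.
Vertex = tuple[float, float, float]


def blend_expressions(
    neutral: list[Vertex],
    targets: dict[str, list[Vertex]],
    weights: dict[str, float],
) -> list[Vertex]:
    """Return neutral + sum over expressions of weight * (target - neutral)."""
    blended: list[Vertex] = []
    for i, base in enumerate(neutral):
        x, y, z = base
        for name, w in weights.items():
            tx, ty, tz = targets[name][i]
            x += w * (tx - base[0])
            y += w * (ty - base[1])
            z += w * (tz - base[2])
        blended.append((x, y, z))
    return blended


# Toy two-vertex "face" with two hypothetical captured expressions.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
targets = {
    "frown": [(0.0, -1.0, 0.0), (1.0, -1.0, 0.0)],
    "smile": [(0.0, 1.0, 0.0), (1.0, 2.0, 0.0)],
}
half_frown = blend_expressions(neutral, targets, {"frown": 0.5})
```

With more captured expressions available as targets, more combinations can be reached by varying the weights, which is one reason a large capture set is useful.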

A specialist setup with high-quality cameras

There are two approaches to creating high-resolution renders with photogrammetry. Specialists either use a large number of standard cameras or, as Stasis Media does, a specialist setup built around a precisely controlled turntable that moves in steps, with scans taken quickly by a smaller set of cameras. In this second approach, the cameras must be fixed in place and of higher quality than in the first.

Stasis Media has recently switched to Sony cameras. Each shoot uses multiple Alpha 7R IV cameras to capture the intricate details of the face and the smaller RX0 II to capture the body; up to 20 cameras can be connected via USB. All are controlled and triggered through a combination of hardware and software, so that the cameras fire synchronously and in step with the movements of the turntable and the rapid LED flashes, enabling the images to be accurately stitched together.

In addition, the company uses trigger boxes from Esper together with custom-made electronic circuits hooked into its control system, which is based on Arduino and Raspberry Pi boards that send commands to the turntables, flashes, and controls.

Mason says: “When you're trying to do a multi-camera shoot, it's really important that you manage to get the flashes synchronized with the camera exposures. All cameras inherently have a shutter lag from when you press the shutter to the exposure being taken and you have to synchronize and time those things very precisely. The one thing I've found, particularly with the Alpha 7R IVs, is that the synchronization between multiple cameras and the flash exposures was very tight, allowing us to shoot faster. We had four Alpha 7R IVs on a recent shoot aimed at the face and they were all firing very, very consistently.”

“The shoot lasted four, maybe five hours and we had over 99 percent of all the images work first time. What we would normally do when we had our old cameras was to check through everything after each pose, make sure everything was captured correctly. We did that five or six times with Alpha 7R IVs and every sequence was working off the bat. There were no flash exposures missed, there was nothing out of sync. We took them back to the PCs and then we'd run the next 10. We had the confidence to know that the setup was working accurately, every time.”
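The timing problem Mason describes, compensating for each camera's shutter lag so that all exposures coincide with the flash, can be sketched as follows. This is a simplified illustration under stated assumptions: the lag values are invented for the example, not measured figures for any Sony camera, and `trigger_schedule` is a hypothetical helper rather than part of any trigger-box firmware.

```python
# Sketch: stagger trigger signals so every exposure starts at the same instant.
# Lag values below are made up for illustration.


def trigger_schedule(
    shutter_lag_ms: dict[str, float],
    flash_offset_ms: float = 0.0,
) -> tuple[dict[str, float], float]:
    """Return (per-camera trigger delay in ms, flash time in ms).

    The camera with the longest lag is triggered at t = 0; faster cameras
    are delayed by the difference so all exposures begin together.
    """
    longest = max(shutter_lag_ms.values())
    delays = {name: longest - lag for name, lag in shutter_lag_ms.items()}
    flash_at = longest + flash_offset_ms
    return delays, flash_at


# Hypothetical lags for two face cameras and one body camera.
lags = {"face_1": 20.0, "face_2": 22.5, "body_1": 30.0}
delays, flash_at = trigger_schedule(lags)
# delays is {'face_1': 10.0, 'face_2': 7.5, 'body_1': 0.0}; flash_at is 30.0
```

The more consistent each camera's lag is from shot to shot, the smaller the safety margin needed around the flash, which fits Mason's remark that tight synchronization allowed faster shooting.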

Creating training material for professional hair stylists

The company has recently created digital humans as part of an awareness campaign for a UK-based safer-gambling charity, and also works with Hamburg-based Schwarzkopf Professional (Henkel GmbH) to create training material for its thousands of professional hair stylists and colourists.

One of the biggest problems facing such teams is time: “We were recently in Hamburg, creating 360-degree sequences to show every stage of the cut and coloring process. Models will come in at different stages of their haircuts or coloration and we will then shoot from nine cameras at equidistant angles and scan the model in 360 degrees. However, the shoot was being undertaken in tandem with a very high-pressure video and photo collection being run at the same time, so we had around 120 seconds per sequence to shoot 900 images.”
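The figures in that quote imply a tight per-step budget. The sketch below works it out under two assumptions that the article does not confirm: that all nine cameras fire once at every turntable step, and that the turntable sweeps a full 360 degrees per sequence. `capture_budget` is a hypothetical helper name.

```python
# Worked arithmetic for the Hamburg shoot figures quoted above.
# Assumes every camera fires once per turntable step (not stated in the article).


def capture_budget(
    total_images: int,
    cameras: int,
    window_s: float,
    sweep_deg: float = 360.0,
) -> tuple[int, float, float]:
    """Return (turntable steps, degrees per step, seconds per step)."""
    if total_images % cameras != 0:
        raise ValueError("total_images must divide evenly across cameras")
    steps = total_images // cameras
    return steps, sweep_deg / steps, window_s / steps


steps, deg_per_step, sec_per_step = capture_budget(900, 9, 120.0)
# 100 steps, about 3.6 degrees per step, about 1.2 seconds per step
```

On that reading, the rig has roughly 1.2 seconds to move the turntable, settle, fire the flashes, and expose all nine cameras before the next step.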

No problem to change things on the fly

“When you've got a lot of cameras, it's very important to be able to coordinate the camera settings through an SDK. Once you've got all the cameras rigged up and laser-aligned, all the lenses set to the right focal length, if you're then pressing buttons on a camera over and over, every time you have to change something, that's a lot of downtime. The SDK is imperative to allow you to change things on the fly, or to take reference shots with a wider aperture before doing the scanning. You just can't have someone on the ladder, going up and down the rig, changing every setting because, not only does this take time, but if one camera is knocked out, even by a millimeter, you've got to go through the whole calibration process again. Even taking the cards out of the cameras is a mission.”
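As a rough picture of what coordinating settings through an SDK looks like in code, the sketch below pushes one settings profile to every camera in a rig, then switches profile for the scan. Everything here is hypothetical: `FakeCamera`, `apply_profile`, the setting key, and the aperture values are stand-ins invented for illustration and do not reflect the API of any real camera SDK.

```python
# Sketch: change a setting on every rigged camera from software.
# FakeCamera is an in-memory stand-in, not a real SDK class.
from dataclasses import dataclass, field


@dataclass
class FakeCamera:
    """Hypothetical handle for one camera on the rig."""
    name: str
    settings: dict[str, str] = field(default_factory=dict)

    def set(self, key: str, value: str) -> None:
        # A real SDK call would send this over USB; here we just record it.
        self.settings[key] = value


def apply_profile(cameras: list[FakeCamera], profile: dict[str, str]) -> None:
    """Apply every setting in the profile to every camera."""
    for cam in cameras:
        for key, value in profile.items():
            cam.set(key, value)


# 20 cameras, as in the rig described earlier; aperture values are examples.
rig = [FakeCamera(f"cam_{i:02d}") for i in range(20)]
REFERENCE_PROFILE = {"aperture": "f/2.8"}  # wider aperture for reference shots
SCAN_PROFILE = {"aperture": "f/11"}        # narrower aperture for scanning

apply_profile(rig, REFERENCE_PROFILE)  # take reference shots here
apply_profile(rig, SCAN_PROFILE)       # then switch the whole rig for the scan
```

The point of the pattern is that nobody touches the cameras between the two profiles, so the laser alignment and calibration stay intact.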

Camera technology and 3D rendering engines have reached the point where avatars are becoming almost too lifelike. Mason highlighted a recent shoot where eye texture and individual eyelash follicles could be captured from a full-headshot reference image taken on the Alpha 7R IV, rather than via a dedicated camera with a 200 mm lens zoomed in closely on the eyeball. Mason also highlights the advances in rendering hair: “Digital hair has always been a very difficult thing to do, in real-time in particular. The guys that are here have been working with the digital hair for probably six, seven years now and have really started to nail the visuals of this both in offline renders and real-time renders.”

This has meant companies are requesting digital avatars that, while being unique, cannot be linked to any living person, celebrity or otherwise.

“This happened with the commercial we did for the gambling charity, for example. For this we needed to scan a model and get a very high-quality digital avatar that looks as close to real as possible, but was not a real person. So not only was the audio recorded by a third person, but we needed to take a base model and change it slightly: to have a different hairstyle, different eyes, a different mouth.”

That this can now be done for lower-budget commercials and not just multi-million-dollar films and video games shows just how far the industry has come.

Author

Andrei Marcu is Business Manager, United Kingdom and Ireland, at Sony Digital Imaging

Contact

Sony Europe B.V., Zweigniederlassung Deutschland

Kemperplatz 1

10785 Berlin

Germany

+49 30 419 551 000

+49 30 419 552 000