Scanning in Motion

Traditionally, 2D technology was the prevalent approach to automation in the industrial sector. This has changed as the applications to be automated have grown more complex, and in many of them 2D machine vision has gradually given way to 3D machine vision.

In the era of Industry 4.0, machine vision largely determines what robots are capable of and which tasks they can perform. 2D sensing may still be the right choice for simple applications that do not require depth information, such as robotic barcode reading, label verification, or character recognition. More complex tasks, in which the robot needs to know the exact X, Y, and Z coordinates of a scene, require 3D machine vision. From the acquired 3D data, a robotic system determines the exact position and orientation of an object. It can then guide the robot to approach the object without colliding with anything, pick it, and place it at another location or perform a further task. 3D machine vision is widely used in automated bin picking, material handling, machine tending, object sorting, inspection, and many other tasks.
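A depth measurement is commonly turned into X, Y, and Z coordinates with a pinhole camera model. The sketch below illustrates that generic step. It assumes hypothetical intrinsic parameters (`fx`, `fy`, `cx`, `cy`) and a depth value already measured along the optical axis. It is not Photoneo's SDK or processing pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PinholeIntrinsics:
    """Hypothetical pinhole camera parameters, all in pixels."""
    fx: float  # focal length along the image u axis
    fy: float  # focal length along the image v axis
    cx: float  # principal point, u coordinate
    cy: float  # principal point, v coordinate


def back_project(u: float, v: float, depth: float, k: PinholeIntrinsics) -> tuple[float, float, float]:
    """Convert pixel (u, v) with depth Z into camera-frame coordinates (X, Y, Z)."""
    x = (u - k.cx) * depth / k.fx
    y = (v - k.cy) * depth / k.fy
    return (x, y, depth)


if __name__ == "__main__":
    k = PinholeIntrinsics(fx=1000.0, fy=1000.0, cx=640.0, cy=480.0)
    # A pixel 100 px right of the principal point, 2 m away, lies 0.2 m off-axis.
    print(back_project(740.0, 480.0, 2.0, k))
```

In a real system, the intrinsics come from the camera's calibration. The resulting camera-frame point must still be transformed into the robot's coordinate frame before the robot can act on it.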

Early Shortcomings of 3D Scanning of Dynamic Scenes

To imitate the human capability to see, robots need to be able to capture a 3D scene in both its static and dynamic states. However, this is not as easy as it may seem. In fact, traditional 3D area sensing technologies have not been able to capture a moving scene and provide its 3D reconstruction in high quality and without motion artifacts. If the camera or the scanned object moves, the output is distorted.

This challenge could not be addressed with any of the existing 3D sensing methods, be it Time-of-Flight approaches powering ToF area scan and LiDAR devices, or triangulation-based technologies, among them profilometry, photogrammetry, stereo vision, or structured light systems. Though each of these methods excels in some aspects, they also have their limitations, which makes each technology suitable for different kinds of applications and customer needs. When it comes to 3D scanning in motion, however, none of the approaches can provide satisfactory results.

Time-of-Flight systems, for instance, are very fast and can offer near-real-time processing, but at the cost of resolution. Structured light systems, on the other hand, can achieve submillimeter resolution, but neither the scene nor the camera may move during acquisition. This slows down scanning and ultimately lengthens the cycle time. For each application that required 3D scanning of objects in motion, customers therefore had to choose between high quality and high speed.
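A short calculation shows why sequential pattern projection conflicts with motion. The object keeps moving for the whole time it takes to project all patterns, so its displacement is speed × number of patterns × time per pattern. The pattern count and per-pattern time used below are hypothetical and do not describe any particular product.

```python
def displacement_during_capture(speed_m_s: float, n_patterns: int, pattern_time_s: float) -> float:
    """Distance (m) an object travels while a sequential system projects n_patterns frames."""
    return speed_m_s * n_patterns * pattern_time_s


def max_speed_for_budget(budget_m: float, n_patterns: int, pattern_time_s: float) -> float:
    """Highest object speed (m/s) that keeps the motion during capture within budget_m."""
    return budget_m / (n_patterns * pattern_time_s)


if __name__ == "__main__":
    # Hypothetical: 10 patterns at 10 ms each, conveyor at 1 m/s.
    print(displacement_during_capture(1.0, 10, 0.01))  # about 0.1 m of motion
    # Speed that would keep motion under a 0.5 mm budget with the same timing.
    print(max_speed_for_budget(0.0005, 10, 0.01))  # about 0.005 m/s
```

Under these assumed numbers, the object moves roughly 100 mm during one capture. That is orders of magnitude beyond a submillimeter resolution target, which is why the scene must stand still.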

A Novel Approach to 3D Scanning in Motion

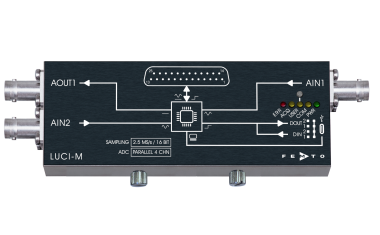

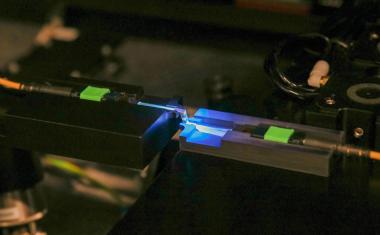

Photoneo, a developer of 3D machine vision systems and intelligent automation solutions, came up with a novel approach to 3D area scanning of dynamic scenes, which the company calls "Parallel Structured Light". The technology projects a single laser sweep onto the scene and forms the structured light patterns on the sensor side.

For this, Photoneo uses a proprietary CMOS sensor with a multi-tap shutter and a “mosaic” pixel pattern. Each pixel in the sensor can be individually modulated by its own shutter signal (pixel-mosaic code) to produce a specific structured light pattern. Conventional structured light systems modulate pixels in the projection field; a Parallel Structured Light system instead modulates pixels directly in the sensor.
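Sensor-side pattern formation can be illustrated with a deliberately simplified model, which is an assumption and not Photoneo's published design. The model makes four assumptions:

- The laser sweep is divided into time slots.
- The slot in which the laser crosses a pixel's line of sight identifies the laser angle.
- A hypothetical "super-pixel" consists of several sub-pixels.
- Each sub-pixel keeps its shutter open only in slots where one bit of a Gray code is 1.

Each sub-pixel then captures one bit of one "pattern" during the same exposure.

```python
def gray_encode(n: int) -> int:
    """Binary-reflected Gray code of n."""
    return n ^ (n >> 1)


def gray_decode(g: int) -> int:
    """Inverse of gray_encode: XOR of all right shifts of g."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


def shutter_open(sub_pixel: int, time_slot: int) -> bool:
    """Hypothetical shutter code: sub-pixel b is open when bit b of the slot's Gray code is 1."""
    return ((gray_encode(time_slot) >> sub_pixel) & 1) == 1


def expose_super_pixel(hit_slot: int, n_bits: int) -> list[int]:
    """Charge (1 or 0) collected by each sub-pixel when the laser passes during hit_slot."""
    return [1 if shutter_open(b, hit_slot) else 0 for b in range(n_bits)]


def decode_super_pixel(charges: list[int]) -> int:
    """Reassemble the Gray code from the sub-pixel charges and recover the time slot."""
    g = 0
    for bit, charge in enumerate(charges):
        if charge:
            g |= 1 << bit
    return gray_decode(g)


if __name__ == "__main__":
    charges = expose_super_pixel(5, 4)
    print(charges, decode_super_pixel(charges))  # [1, 1, 1, 0] 5
```

The model ignores ambient light, noise, and the fact that neighboring sub-pixels view slightly different scene points. It only shows how all pattern bits can be collected in a single exposure rather than in sequential frames.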

The result of this approach is the ability to capture (or construct) multiple structured light patterns simultaneously, in a single exposure window. This enables 3D area scanning of moving objects without motion artifacts.
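Once a pixel's code identifies when the laser passed, depth follows from ordinary laser triangulation. The sketch makes three assumptions: the sweep angle is a linear function of time, the camera sits at the origin, and the laser emitter sits a known baseline away along the x axis. The function names and geometry are hypothetical illustrations.

```python
import math


def slot_to_laser_angle(slot: int, n_slots: int, start_rad: float, end_rad: float) -> float:
    """Laser angle at the center of a time slot, assuming a linear sweep (hypothetical)."""
    return start_rad + (slot + 0.5) * (end_rad - start_rad) / n_slots


def triangulate_depth(pixel_angle_rad: float, laser_angle_rad: float, baseline_m: float) -> float:
    """Depth Z (m) where the camera ray meets the laser plane.

    Camera ray:  X = Z * tan(pixel_angle), angle measured from the optical axis toward the laser.
    Laser plane: X = baseline - Z * tan(laser_angle), angle measured toward the camera.
    Setting both equal gives Z = baseline / (tan(pixel_angle) + tan(laser_angle)).
    """
    denom = math.tan(pixel_angle_rad) + math.tan(laser_angle_rad)
    if denom <= 0:
        raise ValueError("rays do not intersect in front of the camera")
    return baseline_m / denom


if __name__ == "__main__":
    # On-axis pixel, 0.2 m baseline, laser tilted so that tan(angle) = 0.2: depth is 1 m.
    print(triangulate_depth(0.0, math.atan(0.2), 0.2))
```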

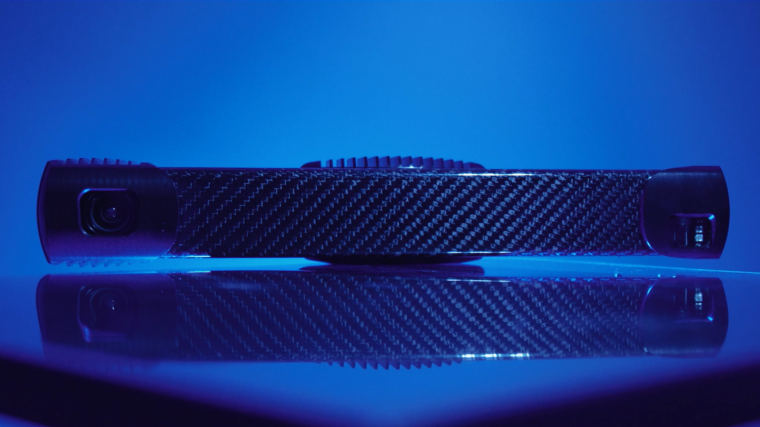

A 3D Camera Powered by the Parallel Structured Light

The approach has been recognized with multiple awards. It is implemented in Photoneo's MotionCam-3D camera, which can capture objects moving at up to 144 kilometers per hour (40 m/s). It provides a point cloud resolution of 0.9 Mpx and an accuracy of 300-1,250 μm, depending on the model. The camera can also scan static scenes, in which case it achieves even higher resolution and accuracy: up to 2 Mpx and 150-900 μm, respectively.

Solving the Challenges of Vision-Guided Robotics

3D scanning in motion has been a major challenge for vision-guided robotics because the persistent trade-off between quality and speed seemed insoluble. Equipping robots with vision that excels on both fronts allows automation to advance even faster and expand in new directions.

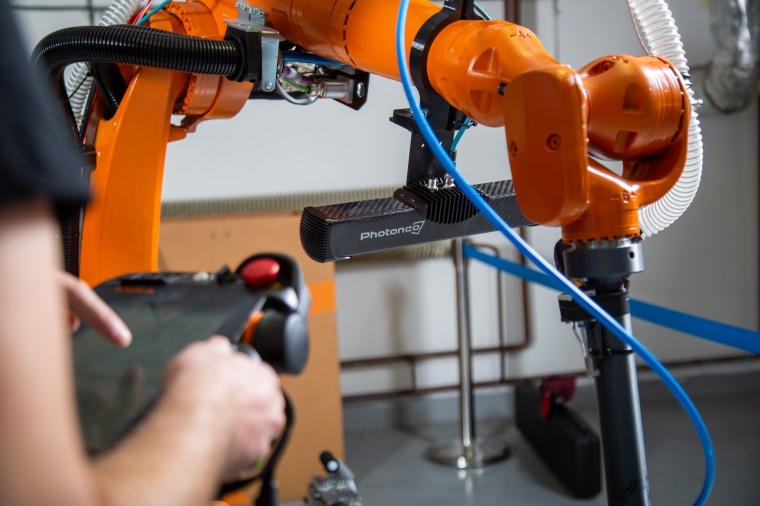

This opens up a large space for hand-eye applications, in which the camera is mounted directly on the robotic arm. Traditionally, the robot had to stop to trigger a scan and then wait during acquisition. Applications requiring multiple scans from several perspectives prolonged the cycle time even further. The Parallel Structured Light technology can capture the target object while the arm carrying the camera is moving. Scans can therefore be acquired during continuous movement of the arm or the object, without any pauses.
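When the camera rides on a moving arm, a point measured in the camera frame must be mapped into the robot base frame using the arm pose at the moment of capture. The sketch composes two 4x4 homogeneous transforms: the flange pose in the base frame and a hand-eye calibration of the camera on the flange. The transforms are written in plain Python to stay self-contained. The timestamped pose log and the nearest-pose lookup are hypothetical simplifications; real systems often interpolate between poses.

```python
import math

Mat4 = list[list[float]]
Vec3 = tuple[float, float, float]


def matmul(a: Mat4, b: Mat4) -> Mat4:
    """Multiply two 4x4 homogeneous transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def transform_point(t: Mat4, p: Vec3) -> Vec3:
    """Apply a 4x4 homogeneous transform to a 3D point."""
    x, y, z = p
    return tuple(t[i][0] * x + t[i][1] * y + t[i][2] * z + t[i][3] for i in range(3))


def pose_z(theta_rad: float, tx: float, ty: float, tz: float) -> Mat4:
    """Transform p' = Rz(theta) p + t (rotation about Z, then translation)."""
    c, s = math.cos(theta_rad), math.sin(theta_rad)
    return [[c, -s, 0.0, tx], [s, c, 0.0, ty], [0.0, 0.0, 1.0, tz], [0.0, 0.0, 0.0, 1.0]]


def pose_at(timestamp: float, pose_log: list[tuple[float, Mat4]]) -> Mat4:
    """Pick the logged flange pose closest in time to the scan timestamp (hypothetical log)."""
    return min(pose_log, key=lambda entry: abs(entry[0] - timestamp))[1]


def camera_point_to_base(p_cam: Vec3, t_base_flange: Mat4, t_flange_cam: Mat4) -> Vec3:
    """Map a camera-frame point into the base frame: T_base_cam = T_base_flange @ T_flange_cam."""
    return transform_point(matmul(t_base_flange, t_flange_cam), p_cam)


if __name__ == "__main__":
    log = [(0.00, pose_z(0.0, 1.0, 0.0, 0.5)), (0.01, pose_z(math.pi / 2, 1.0, 0.0, 0.5))]
    hand_eye = pose_z(0.0, 0.0, 0.0, 0.1)  # camera 0.1 m along the flange Z axis
    print(camera_point_to_base((0.2, 0.0, 0.0), pose_at(0.009, log), hand_eye))
```

Using the pose from the wrong instant shifts every point by however far the arm moved in between. This is why the capture timestamp matters once scanning happens in motion.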

Collaborative robotics is another sector that traditional methods significantly limited. Applications with collaborative robots usually involve a human worker and a robot working together. When the worker holds an object, the robot needs to recognize and localize it in the worker’s hands in order to take it or perform another task with it. Because the human hand is never perfectly still, the robot needs a vision system that is robust to vibration and movement.

Another large group of applications involves scanning objects of different heights as they travel on a moving conveyor belt. The Parallel Structured Light technology can capture differently sized objects in constant motion while remaining robust to vibration. These applications may also include “dimensioning”, used in logistics, wood processing, and other sectors, where the 3D vision system measures the objects’ exact dimensions or bounding boxes.
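Dimensioning can be sketched as separating the object from the belt and measuring the extent of what remains. The example below makes hypothetical simplifications: the belt is the plane `z = belt_z` in the point cloud's frame, the object is roughly aligned with the x and y axes, and `min_height` is an arbitrary noise threshold. Production systems typically fit the belt plane and compute an oriented bounding box instead.

```python
Point = tuple[float, float, float]


def dimension_object(points: list[Point], belt_z: float, min_height: float = 0.002) -> tuple[float, float, float]:
    """Return (length, width, height) of an axis-aligned box around the points above the belt."""
    # Keep only points that rise more than min_height above the belt surface.
    obj = [p for p in points if p[2] - belt_z > min_height]
    if not obj:
        raise ValueError("no points above the belt")
    xs = [p[0] for p in obj]
    ys = [p[1] for p in obj]
    zs = [p[2] for p in obj]
    # Height is taken from the belt surface, because the object's underside is not visible.
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - belt_z)


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0),  # belt points
             (0.1, 0.2, 0.05), (0.4, 0.35, 0.12), (0.25, 0.3, 0.08)]  # object points
    print(dimension_object(cloud, belt_z=0.0))  # roughly (0.3, 0.15, 0.12)
```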

Other applications that may benefit greatly from 3D area scanning in motion include:

- 100 percent quality control and inspection, of both small objects and large spaces;
- recognition, sorting, and volume measurement of food and organic items, such as corn passing on a conveyor belt;
- large-field scanning for phenotyping, where the vehicle carrying the 3D camera does not need to stop;
- subject monitoring and feature recognition for checking the health of animals.
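Volume measurement of items on a belt is often approximated by integrating a top-down height map. Each grid cell contributes its height above the base surface times the cell's footprint area. This is a simple 2.5D sketch that assumes a uniform grid and items without overhangs. The `cell_area_m2` parameter is hypothetical and would come from the camera geometry in practice.

```python
def volume_from_height_map(heights: list[list[float]], base: float, cell_area_m2: float) -> float:
    """Approximate volume (m^3) above `base` by summing per-cell column volumes."""
    total = 0.0
    for row in heights:
        for h in row:
            # Cells at or below the belt surface (including noisy negative readings) add nothing.
            if h > base:
                total += (h - base) * cell_area_m2
    return total


if __name__ == "__main__":
    # Four 1 cm x 1 cm cells (1e-4 m^2 each) with heights in meters.
    grid = [[0.0, 0.01], [0.02, 0.01]]
    print(volume_from_height_map(grid, base=0.0, cell_area_m2=1e-4))  # about 4e-06 m^3
```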

These are only a few of the examples that demonstrate the importance of 3D area scanning in motion. Industry 4.0 is automating an increasing number of processes and applications, and machine vision is an indispensable part of this trend. Now that robots can, in a sense, “see” and “understand”, far fewer robotic tasks remain out of reach. However, the objective of robotics is not to replace humans. It is to help them with tasks that are physically demanding, hazardous, or monotonous, and to find new ways to make human-robot collaboration more effective.

Author

Andrea Pufflerová, PR Specialist

Company

Photoneo s.r.o., Plynárenská 6

82109 Bratislava

Slovakia
