“There is a huge drive to push AI capability to the edge”

Efinix has recently launched a mid-range FPGA, filling the gap between low-cost, low-performance devices and very expensive high-performance ones. To mark the occasion, inspect spoke with Mark Oliver, vice president of marketing and business development, who also took the opportunity to discuss current trends and to explain how Efinix is trying to help camera developers achieve a faster time to market.

inspect: Efinix recently launched the Ti180 FPGA. What are its key features?

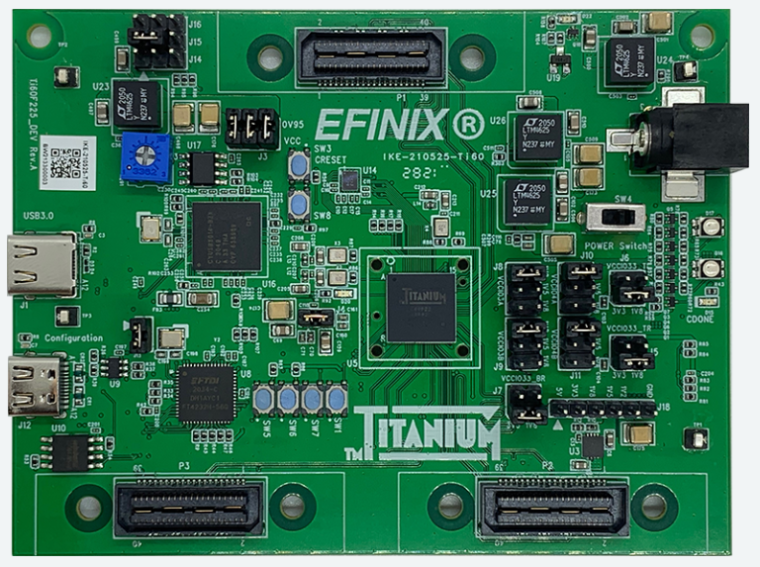

Mark Oliver: Yes indeed, we recently rolled out the Titanium Ti180 and we are now sampling silicon to early customers. The FPGA is the latest member of the Titanium family and is fabricated in the same 16 nm process node. It has 180K logic elements but, thanks to the efficient Quantum Compute Fabric, retains a small, low-power footprint. It has an embedded LPDDR4/4X interface for high-speed connectivity to external memory as well as high-speed 2.5G MIPI interfaces for connectivity with the latest camera sensors. It features 13 Mbits of embedded memory as well as 640 DSP blocks. It shares the same security features as the other Titanium designs, making it a good fit for edge applications.

inspect: Why does it make sense to supplement the product range of FPGAs with a mid-range model?

Oliver: We are seeing huge demand for mid-range FPGA devices. It is becoming increasingly clear that Moore’s law is slowing down and it is becoming prohibitively expensive to produce custom silicon for all but the highest-volume applications. Designers are looking for alternatives that are cost-effective and deliver fast time to market. Efinix FPGAs deliver just that in a dense and efficient platform. Using Efinix FPGAs, designers can innovate rapidly in a configurable fabric, delivering applications that run at hardware speed with low power consumption.

Once the design is ready, the cost-effective nature of the Efinix devices means that the application can be taken to high-volume production using the same devices that were used for development in the lab. That makes for very fast time to market with zero risk and zero NRE (non-recurring engineering) cost.

inspect: For which applications is it primarily suitable?

Oliver: We have customers designing Titanium family devices into just about every application you can think of. I have to say that with the high-speed MIPI interface and the option of a large frame buffer in external LPDDR4, it is a particularly good fit for smart camera designs. We see ongoing designs for industrial automation cameras and cameras with embedded AI as well as the more traditional surveillance and thermal applications.

inspect: Which industries and applications will the already planned future mid-range FPGAs cover?

Oliver: One of the clear trends we see in the market right now is the drive towards edge compute. There is a huge desire to place compute next to where the data is generated and where it has context. These edge applications are a great fit for Efinix FPGAs. They are characterized by constrained space and power, yet they have high compute performance requirements. As vision and artificial intelligence increasingly find application at the edge, the compute requirements rise even further, driving the need for a parallel approach that is only achievable with custom silicon or an FPGA. With the price of custom silicon solutions exploding, FPGAs are coming into their own. We see a huge adoption of Efinix products at the edge, where increasing amounts of data need to be processed in real time.

These applications are not confined to the more obvious edge applications such as smart cities and automotive, but also extend into areas such as AR/VR for both consumer and industrial applications. Here, data from many different sensors needs to be aggregated in real time to extract real-time intelligence. This is a trend that is not easily satisfied by conventional processing techniques and is becoming prohibitively expensive for custom solutions. We see FPGAs expanding beyond their traditional role to become self-contained and highly parallel edge compute platforms.

inspect: The RISC-V-based System on Chip (SOC) is intended to shorten the development time of individual image processing systems. What exactly are the advantages for developers?

Oliver: The advantage of a RISC-V approach is that the application can be developed and iterated in software on the RISC-V very rapidly. Once hot spots in the code are identified, they can be ported to the FPGA hardware either as accelerators or using the RISC-V custom instruction capability. That is a huge benefit and speeds time to market. Talking to our customers, though, we found they were still spending time designing the hardware accelerators and all of the interface logic to hook them up. We decided to help that process by defining a standard way of interfacing to an accelerator block and producing system-on-chip templates that have all those interfaces pre-designed.

In that way, the designer simply has to code the tiny core of the accelerator and everything else is delivered pre-made. We took this approach one step further and produced a complete reference design for a vision application with accelerator “sockets” left blank for the designers to innovate and differentiate their applications. The result for the customer is the ability to dynamically control hardware/software partitioning and then very rapidly take a design from concept to fully functional product operating with the desired performance.

inspect: The Efinix Tiny ML Platform is said to enable FPGA performance with low power consumption. What exactly does this platform include?

Oliver: When you look at how the AI market is evolving, there is a huge drive to push AI capability to the edge. The open-source community has made great efforts in delivering standard tool flows, and there are now tools like TensorFlow Lite that make AI available to microcontroller-class devices at the edge. Unfortunately, microcontrollers do not normally have the performance needed to run AI at the required performance points. One of the very exciting capabilities of our RISC-V core is the ability to implement custom instructions. We decided that we would put together a library of custom instructions that take the fundamental operators defined by TensorFlow Lite and accelerate them on the RISC-V.

The resulting Tiny ML Platform can take quantized models and run them at hardware speed in the accelerated RISC-V core. This delivers the required performance for AI at the edge while retaining the small footprint and low power required in these applications. In the spirit of open source, we are making the Tiny ML Platform available to the community through our GitHub page free of charge.
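For readers unfamiliar with the term, a "quantized model" stores weights and activations as small integers instead of floating-point numbers. The sketch below illustrates the affine int8 scheme documented for TensorFlow Lite, where real_value = scale * (int8_value - zero_point). It is a generic illustration and not Efinix code; the function names `choose_params`, `quantize` and `dequantize` are hypothetical, as are the sample values.

```python
def choose_params(lo, hi, qmin=-128, qmax=127):
    """Pick a scale and zero point that map the real range [lo, hi] onto int8."""
    # The real range must contain 0.0 so that zero is exactly representable.
    lo, hi = min(lo, 0.0), max(hi, 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:
        return 1.0, 0  # degenerate range: every value is zero
    zero_point = round(qmin - lo / scale)
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map real values to int8, clamping anything outside the range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Recover approximate real values: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in q_values]


# Hypothetical weights, chosen only for illustration.
weights = [-1.0, 0.0, 0.5, 3.0]
scale, zero_point = choose_params(min(weights), max(weights))
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)
```

Each restored value differs from the original by at most half a quantization step, which is the precision traded for 8-bit storage and integer arithmetic that is cheap to implement in hardware.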

inspect: In which applications does it show its strengths?

Oliver: The Tiny ML Platform shows its strength wherever AI is deployed in environments where compute resources are limited. Typically, we think of these kinds of applications as vision systems for things like human presence detection. It is by no means limited to those types of applications. AI is finding utility in a broad diversity of applications at the edge. Anywhere large amounts of data need to be processed for anomalies or where some form of value can be locally extracted from the data, AI is starting to be applied. The Tiny ML Platform makes this possible using a highly flexible FPGA platform in a small footprint and consuming very little power.

inspect: On the one hand, Efinix makes use of open-source technology by offering a SOC based on RISC-V, and on the other hand it brings the Tiny ML Platform into the open-source community. What is the importance of open source for Efinix?

Oliver: We are very supportive of open-source initiatives. You only have to look at the success of RISC-V and the diversity of tools available for AI development to see how a community working together can achieve so much more than small teams developing in isolation. We hope that by producing reference designs for SOCs, building libraries of accelerators for AI, standardizing interfaces for FPGA acceleration and making all of those available to the open-source community, we will be able to stimulate an ecosystem of developers who, in turn, will make their work public. Where it makes sense, we like to contribute what we can to the community in the hope that it accelerates someone else’s development.

That said, there are clearly things we work on internally that we believe deliver the compelling advantage of Efinix FPGAs, and that information we don’t share. Where possible, though, we like to contribute what we can to the bigger community.

inspect: What innovations can inspect readers expect next from Efinix?

Oliver: Ah well, for that I’m afraid you are going to have to watch us going forward. Suffice it to say, though, that we have much more innovation to do in the Titanium product line and some very exciting ideas in that area. Readers will have seen that we are passionate about making FPGA products accessible. Whether it be through embedded RISC-V processors, standardized acceleration blocks or SOC reference designs, we believe that if we can do the heavy lifting for the end designer, then that speeds time to market for them and stimulates a more vibrant ecosystem in the community. You should expect to see us continue to drive in that direction as we go forward.

Company

Efinix, Inc.

20400 Stevens Creek Boulevard, Suite 200

Cupertino, CA 95014

US
