Why Not to Make the Lens an Afterthought in an Embedded AI Vision System

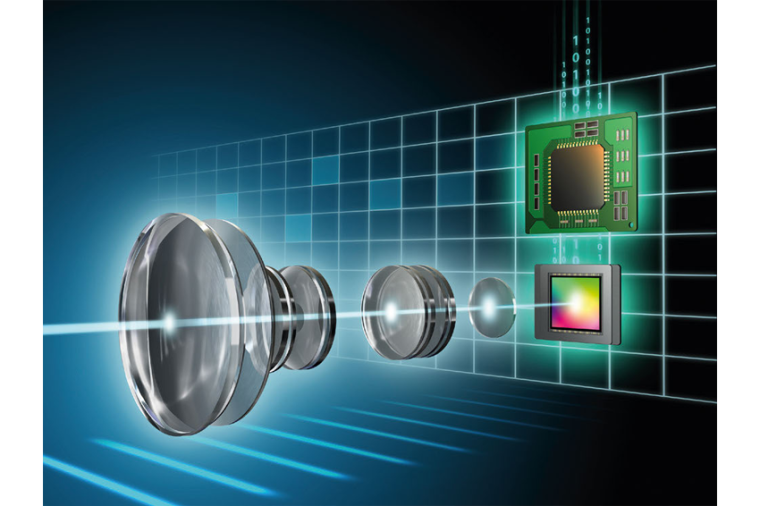

A variety of factors drive image quality. Making the right choices across that range helps reduce the challenges and complexity of AI systems and increases imaging performance along the way.

Developing machine vision or embedded AI systems and scaling them for deployment are already challenging tasks. Converging the two into an integrated Embedded AI Vision System can keep scores of developers and engineers busy for quite some time. The selection of sub-components has a significant impact on the engineering challenge and on the final solution's complexity, performance, physical size, and power consumption. In the optical path of an Embedded AI Vision System, lens selection is a critical milestone and should not be treated as an afterthought, since the lens’ performance and characteristics affect almost every aspect of the downstream vision chain. The right lens can reduce the required AI algorithm complexity and improve final system performance, whereas the wrong lens can hamstring subsequent development.

Choosing the Right Lens

If applied AI for embedded vision aims to replicate human sensing and understanding, then the optical stack plays a significant role in achieving human-like vision for any camera-based application. Carefully selecting the right lens pays huge dividends when transitioning from the lab to the real world, whether the intended application is in automotive, security, medical, robotics, or any other field. For systems that will be deployed in low or changing light, or that are exposed to a wide temperature range, the challenge of delivering consistent optical performance increases dramatically. A lens must excel along multiple vectors to support real-time or post-processing with AI algorithms.

Choosing the right lens is a critical step in system-level optimization and in setting a roadmap toward the desired outcomes. From our experience across industries, applications, and clients, we have found the following first-order lens parameters to be critically important:

- MTF – Modulation Transfer Function is a standardized way to describe an optical system regarding sharpness and contrast and is a key performance indicator when comparing lenses. While commonly used to judge “how good” a lens is, it is not the only, and sometimes not even the best metric for determining what will work best in a given use-case.

- F/# – The ratio of the lens' focal length to the diameter of its entrance pupil; essentially, it describes the amount of light the lens lets in for a given focal length, with a lower F/# letting in more light. The physical aperture, or “iris”, of an optical system defines the total light throughput and directly impacts the lens' ability to produce the required contrast and Depth of Field.

- Distortion – The concept of distortion describes how a lens maps a shape in object space to the image plane. Distortion must be referenced to something, which is sometimes called the “projection”, or may be referred to as Rectilinear, F-tan, or F-theta distortion.

- Relative Illumination (RI) – A normalized percentage value that represents the illumination of any field point relative to the point of maximum illumination, which is typically on-axis. A high RI value means “flat” illumination across the image plane, whereas a low RI may introduce dark corners or edges.

- Dynamic Range – Quantifies a system’s ability to adequately image bright highlights and dark shadows in the same scene, i.e., to capture a wide range of lighting values or conditions in one image without areas being saturated or too dark.
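As a small illustration of the Relative Illumination definition above, the following Python sketch normalizes a set of illumination readings to the brightest one. The function name `relative_illumination` and the sample readings are hypothetical and chosen only for illustration; they are not measurements of any real lens.

```python
def relative_illumination(samples):
    """Return each sample as a percentage of the brightest sample."""
    peak = max(samples)
    if peak <= 0:
        raise ValueError("need at least one positive illumination sample")
    return [100.0 * s / peak for s in samples]


# Hypothetical readings from the image center (first) to the corner (last).
readings = [200.0, 190.0, 170.0, 140.0, 100.0]
ri = relative_illumination(readings)
print(ri)  # [100.0, 95.0, 85.0, 70.0, 50.0]
```

In this made-up profile the corner receives half the illumination of the center, which would show up as visibly darker corners unless corrected downstream.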

Hyperspectral Lenses

As listed previously, MTF, or the Modulation Transfer Function, is a standardized way of comparing the optical performance of different imaging systems. For AI algorithm-based systems, it is generally advisable to select lenses that have a fairly consistent MTF across the FOV (Field of View) and show stable MTF behavior over temperature. The intended wavelength spectrum, which depending on the application typically includes visible (VIS) and/or near-infrared (IR) light, also has to be taken into consideration when comparing MTF performance. A lens that checks all these boxes is a fully athermalized lens, variously called a hyperspectral, RGB-IR, or Day/Night lens. Such a lens requires not only deep design experience but also an in-depth understanding of material properties, advanced coating know-how, and manufacturing process expertise that has been optimized and advanced over decades.

Not all applications require such an advanced optical system, and it is up to the application domain expert to decide which components to optimize and how far. However, everything that can be optimized at the lens level, “at the speed of light”, reduces processing time and power later, possibly resulting in smaller and more energy-efficient computing systems. It is also much easier to start with good lens performance than to recreate “missing” data to compensate for poor optical performance, since it is almost impossible for processing to make up for data lost at the lens level (the often-cited concept of garbage in, garbage out applies here as well).

Depth of Field

Other optical performance parameters such as the F/# and Relative Illumination (RI) also contribute to the consistency of imaging quality that any AI algorithm must deal with. Unfortunately, there is no “one size fits all.” For example, the system architect has to decide whether to optimize a system for low-light performance with a low F/# or improve the Depth of Field (DoF), which defines the range from the near to the far object distance that is determined to be in focus, with a higher F/#. In this example, a high RI may contribute to a well-balanced system where the edge illumination would tolerate some “stopping down” (increasing the F/#) to increase DoF. Lens selection can quickly become a multi-dimensional optimization with various trade-offs.
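The trade-off between light throughput and Depth of Field can be sketched with the standard thin-lens approximations. This is a minimal Python illustration, not a design tool: the focal length, focus distance, and circle of confusion below are hypothetical values, and the function names are made up for this example.

```python
import math


def depth_of_field(focal_mm, f_number, focus_mm, coc_mm):
    """Near and far limits of acceptable focus (thin-lens approximation).

    coc_mm is the acceptable circle of confusion on the sensor; its value
    is an assumption the system architect has to choose.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = focus_mm * (hyperfocal - focal_mm) / (hyperfocal + focus_mm - 2 * focal_mm)
    if focus_mm >= hyperfocal:
        far = math.inf  # everything beyond the near limit is acceptably sharp
    else:
        far = focus_mm * (hyperfocal - focal_mm) / (hyperfocal - focus_mm)
    return near, far


def light_ratio(f_number_from, f_number_to):
    """Relative image-plane illumination after changing the F/# (scales with 1/N^2)."""
    return (f_number_from / f_number_to) ** 2


# Hypothetical 6 mm lens focused at 2 m, with a 0.006 mm circle of confusion.
near_f2, far_f2 = depth_of_field(6.0, 2.0, 2000.0, 0.006)
near_f4, far_f4 = depth_of_field(6.0, 4.0, 2000.0, 0.006)
print(f"F/2: {near_f2:.0f} mm to {far_f2:.0f} mm")
print(f"F/4: {near_f4:.0f} mm to {far_f4} mm")
print(f"Light at F/4 relative to F/2: {light_ratio(2.0, 4.0):.2f}")
```

Under these assumptions, stopping down from F/2 to F/4 extends the in-focus range in both directions but leaves only a quarter of the light, which is the kind of trade-off the system architect has to weigh.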

Distortion

Distortion is one of the lens specs that software developers would often like to simply make go away, since a high and consistent number of pixels per degree (px/deg) across the entire FOV would be preferred. But since distortion is unavoidable in many cases, Sunex has found ways to manipulate a lens’ distortion profile so that it best supports the algorithm-specific requirements. Our Tailored Distortion expertise has often been applied to SuperFisheye lenses to correct the barrel distortion of large-FOV lenses, providing more px/deg at the field edges. Conversely, the aim of achieving human-like vision has led to the development of FOVEA distortion lenses, which mimic the human eye by increasing the px/deg density in the center while maintaining wide-FOV peripheral vision.
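To make the px/deg idea concrete, the following Python sketch compares the angular pixel density of two ideal reference projections, rectilinear (image height r = f·tan θ) and F-theta (r = f·θ). The focal length and pixel pitch are hypothetical, and real lenses, including tailored-distortion designs, follow their own mapping rather than either ideal curve.

```python
import math


def px_per_deg(model, focal_mm, pitch_mm, theta_deg):
    """Angular pixel density at field angle theta for an ideal projection."""
    theta = math.radians(theta_deg)
    if model == "rectilinear":
        # r = f * tan(theta)  ->  dr/dtheta = f / cos^2(theta)
        dr_dtheta = focal_mm / math.cos(theta) ** 2
    elif model == "f-theta":
        # r = f * theta  ->  dr/dtheta = f
        dr_dtheta = focal_mm
    else:
        raise ValueError(f"unknown projection model: {model}")
    # mm per radian -> pixels per radian -> pixels per degree
    return dr_dtheta / pitch_mm * math.pi / 180.0


# Hypothetical 3 mm lens on a sensor with 3 µm (0.003 mm) pixels.
for angle in (0, 30, 60):
    rect = px_per_deg("rectilinear", 3.0, 0.003, angle)
    ftheta = px_per_deg("f-theta", 3.0, 0.003, angle)
    print(f"{angle:2d} deg: rectilinear {rect:6.1f} px/deg, F-theta {ftheta:6.1f} px/deg")
```

The ideal F-theta mapping keeps px/deg constant across the field, while the ideal rectilinear mapping spends more and more pixels per degree toward the edge, which is why it becomes impractical for very wide fields of view.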

Dynamic Lens Ranges

Not just the first-order lens design parameters, but also a combination of lens design considerations, coating choices, and surface treatments contribute to the dynamic range of a lens, which can significantly affect performance in extreme lighting situations. The dynamic range of a lens or system is defined as the ratio of the largest non-saturating input signal to the smallest detectable input signal. An AI system on a mobile autonomous platform, such as a delivery vehicle driving from broad daylight into a tunnel (or out of one), is a typical example of an application that benefits from a lens with excellent dynamic range to support consistent results. Further examples include an agricultural harvesting machine that drives into the setting sun on its return leg, and stationary systems such as exterior smart infrastructure or security cameras that have to deal with passing light sources such as vehicles or the sun.

Many customers ask what dynamic range really means for the lens itself, since it is the imager (CMOS, CCD, etc.) that detects the light, not the lens. The answer is that the lens must be very good in terms of stray light, glare, and ghosting. With a low dynamic range sensor, these image artifacts can be ignored because the sensor will not pick them up. With an HDR/WDR sensor, however, stray light at best reduces the signal-to-noise ratio (contrast) of the image; at worst, the artifacts themselves become apparent and may be interpreted as another light source, such as an oncoming vehicle, or could obscure real image content.
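The ratio definition of dynamic range can be expressed in decibels or in stops, as in the short Python sketch below. The 20·log10 convention is the one commonly used for image sensors. The `glare_fraction` term is a deliberately crude assumption added only for illustration: it treats stray light as a uniform offset proportional to the brightest signal, and it is not a model taken from this article or from any lens datasheet.

```python
import math


def dynamic_range(largest, smallest, glare_fraction=0.0):
    """Dynamic range as (ratio, dB, stops).

    largest        -- largest non-saturating input signal
    smallest       -- smallest detectable input signal
    glare_fraction -- ASSUMED toy model: stray light adds a uniform floor of
                      glare_fraction * largest on top of the detection limit
    """
    floor = smallest + glare_fraction * largest
    ratio = largest / floor
    return ratio, 20.0 * math.log10(ratio), math.log2(ratio)


# Hypothetical system with a 1,000,000:1 signal range.
_, clean_db, clean_stops = dynamic_range(1_000_000.0, 1.0)
_, glare_db, glare_stops = dynamic_range(1_000_000.0, 1.0, glare_fraction=0.001)
print(f"No stray light:    {clean_db:.1f} dB ({clean_stops:.1f} stops)")  # about 120 dB
print(f"0.1% stray light:  {glare_db:.1f} dB ({glare_stops:.1f} stops)")
```

Even in this toy model, a stray-light level of 0.1% roughly halves the usable range in dB, which illustrates why a lens paired with an HDR/WDR sensor has to control glare and ghosting.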

Simplifying AI with the Right Lens

Who knows where the capabilities of embedded AI vision systems will lead us in the future? Will they be able to ignore dust or dirt on the camera, overcome insufficient lighting, or compensate for MTF changes due to thermal shift? While AI may eventually allow for more relaxed lens requirements, as the human brain does, there is still no substitute for good image quality. We can clearly see the benefits that an “AI-optimized” lens delivers in overall system reliability and consistent image quality, especially if the AI-based vision system operates in varying environmental conditions. Needless to say, size constraints and piece price also factor in when designing the right system, and there is no need to “over-engineer” the final solution. However, the previously mentioned factors that drive image quality, when done right, have the potential to simplify the AI system, optimize algorithms, and reduce system latency and power consumption, all while enhancing imaging performance.

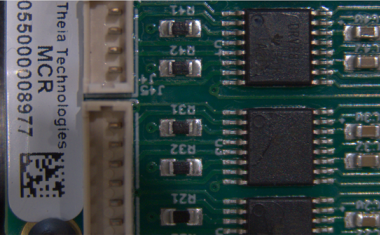

Sunex already has many lens designs that combine low F/#, high Relative Illumination (RI), high dynamic range (HDR), high MTF across the field, and a broad wavelength spectrum, delivering consistent performance across many industries and applications. Often the initial engagement with clients is to select possible options from the existing portfolio of lenses in order to get real-world feedback on what optical performance the actual use-case requires. Based on this feedback, Sunex reviews opportunities to optimize and adapt an existing lens, or may jointly decide with the client to pursue a purpose-built, custom lens design that matches the application and its requirements. For many clients, the company also offers greater vertical integration by designing and manufacturing the entire sensor board, including fully automated active lens/sensor alignment. Sunex aims to collaborate to develop a system that can meet the expected performance, for an acceptable price, in the time frame needed.

Author

Ingo Foldvari

Director of Business Development

Company

Sunex, Inc.

3160 Lionshead Ave Suite B

CA 92010 Carlsbad

US
